Historically, breach data has been useful for a few things, mostly because of Have I Been Pwned and the narrative applied to that data: this username exists, along with its password or hash, so you need to change your password and also expect an uptick in solicitations to the email address mentioned.

But we can do more with breach data.

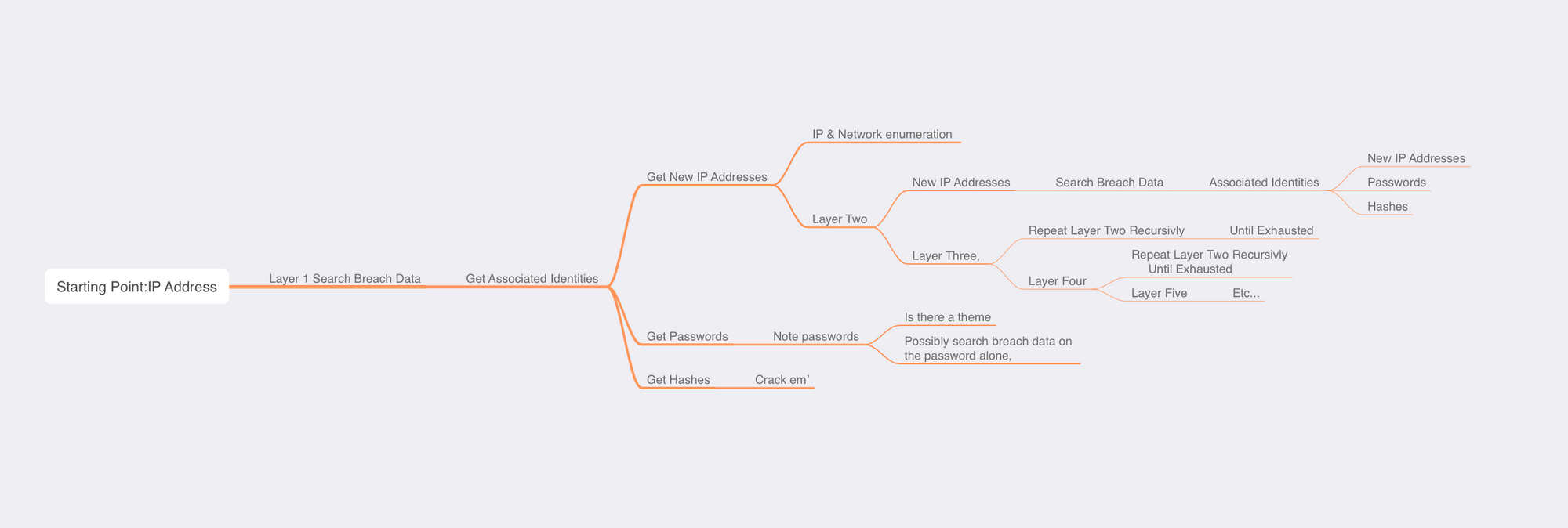

Breach data can include information beyond usernames and passwords or password hashes; some of it includes IP addresses. There are a few things I like to do here: search for an actor's IP address(es) across my own data dumps and those offered by services (such as DeHashed, if you don't mind them seeing what you're looking for), then go to work on the results.

Good questions worth asking within those results are:

From the target IP address, what emails are associated with it? And if I search for each of those emails as a follow-up, what will the results show?

If you're lucky enough to get email addresses you can associate with IP addresses, you may well find those addresses in use elsewhere and seen on other IP addresses too. As you drill down into these, more data will shape a view that may reflect campaign behaviour. You may see obviously automated identities, but keep digging: they'll make the non-automated ones stand out like a sore thumb.
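Those two questions boil down to indexing the data in both directions: IP to emails, and email to IPs. Here's a minimal sketch, assuming your local dump can be flattened to rows with `email` and `ip_address` columns (names borrowed from the DeHashed fields the script further down uses). `build_indexes` and `pivot_from_ip` are hypothetical helpers, and the sample rows are made up, using reserved documentation IP ranges.

```python
import csv
import io
from collections import defaultdict


def build_indexes(rows):
    """Build IP -> emails and email -> IPs maps from breach rows (dicts)."""
    ip_to_emails = defaultdict(set)
    email_to_ips = defaultdict(set)
    for row in rows:
        email = (row.get("email") or "").strip().lower()
        ip = (row.get("ip_address") or "").strip()
        if email and ip:
            ip_to_emails[ip].add(email)
            email_to_ips[email].add(ip)
    return ip_to_emails, email_to_ips


def pivot_from_ip(target_ip, ip_to_emails, email_to_ips):
    """Emails seen on target_ip, each mapped to the *other* IPs it was seen on."""
    emails = ip_to_emails.get(target_ip, set())
    return {e: email_to_ips[e] - {target_ip} for e in sorted(emails)}


# Hypothetical sample dump in CSV form
SAMPLE = """email,ip_address,password
alice@example.com,203.0.113.7,
bot01@example.com,203.0.113.7,
alice@example.com,198.51.100.20,
carol@example.com,198.51.100.20,
"""

rows = list(csv.DictReader(io.StringIO(SAMPLE)))
ip_idx, email_idx = build_indexes(rows)
print(pivot_from_ip("203.0.113.7", ip_idx, email_idx))
# prints {'alice@example.com': {'198.51.100.20'}, 'bot01@example.com': set()}
```

Each new IP in the result is the next thing to search, which is where carol@example.com would turn up.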

The Methodology:

- Search the IP
- Search the emails
- Search the passwords

From those results:

- Search the new IP addresses
- Search the new emails based on the related IP addresses
- Search the new passwords based on the related IP addresses
- Note any hashes / uncracked passwords; if the account matters, you may want to recover that password

Repeat until exhausted.
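The loop above is essentially a breadth-first search where every IP, email and password is a node. A minimal sketch, assuming records are dicts with `ip_address`, `email` and `password` keys (the `password` key name is an assumption), and with a hypothetical `search` function standing in for a real lookup against your dumps or an API. The `max_queries` cap plays the same role as the limits in the script further down, since password pivots in particular can explode.

```python
from collections import deque

# Pivot fields: 'ip_address' and 'email' mirror the DeHashed fields used by
# Ex-Machina.py; 'password' is an assumed field name.
PIVOT_FIELDS = ("ip_address", "email", "password")


def search(records, field, value):
    """Hypothetical stand-in for a real breach search: indices of exact matches."""
    return [i for i, r in enumerate(records) if r.get(field) == value]


def expand(records, seed_field, seed_value, max_queries=50):
    """Breadth-first pivot: search a value, queue every new IP/email/password
    found in the hits, and repeat until exhausted or the query cap is hit."""
    queue = deque([(seed_field, seed_value)])
    seen = {(seed_field, seed_value)}
    hit_ids = set()
    queries = 0
    while queue and queries < max_queries:
        field, value = queue.popleft()
        queries += 1
        for i in search(records, field, value):
            hit_ids.add(i)
            for f in PIVOT_FIELDS:
                v = records[i].get(f)
                if v and (f, v) not in seen:
                    seen.add((f, v))
                    queue.append((f, v))
    return [records[i] for i in sorted(hit_ids)], seen


records = [
    {"email": "bot01@example.com", "ip_address": "203.0.113.7", "password": "Summer2020!"},
    {"email": "bot02@example.com", "ip_address": "203.0.113.7", "password": "Summer2020!"},
    {"email": "jdoe@example.net", "ip_address": "198.51.100.20", "password": "Summer2020!"},
    {"email": "other@example.org", "ip_address": "192.0.2.99", "password": "hunter2"},
]
hits, seen = expand(records, "ip_address", "203.0.113.7")
print([h["email"] for h in hits])
# prints ['bot01@example.com', 'bot02@example.com', 'jdoe@example.net']
```

Note how jdoe@example.net is only reached through the shared password, never through the starting IP.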

You're looking at two types of behaviour, automated and manual. Let's say the IP address 12.34.56.252 has a few email addresses associated with it, [email protected], [email protected], [email protected], [email protected] etc., and also [email protected].

It would be worth searching your breach data for [email protected] and for the automated accounts independently, to see which other IP addresses they've historically been seen on. There will be times when you get no hits (breach data is a gift and it's loaded with chance), but it's still worth doing. Searching those new IP addresses in turn may reveal more hosting infrastructure behind the automated accounts that's worth looking at. The theme here is that the results all complement each other in offering a stronger view.
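One rough way to separate the two behaviours, offered as a hypothetical heuristic rather than the method described above: collapse the digits in each address's local part into a "skeleton". Addresses that share a skeleton with several others look templated, and whatever is left over is a candidate manual identity worth searching on its own. `skeleton`, `split_automated` and the `min_group` threshold are all assumptions to tune against your own data.

```python
import re
from collections import defaultdict


def skeleton(email):
    """Collapse digit runs in the local part: 'bot0142@example.com' -> 'bot#@example.com'."""
    local, _, domain = email.lower().partition("@")
    return re.sub(r"\d+", "#", local) + "@" + domain


def split_automated(emails, min_group=3):
    """Treat addresses whose skeleton is shared by at least min_group addresses
    as templated/automated; return everything else as candidate manual identities."""
    groups = defaultdict(list)
    for e in set(emails):
        groups[skeleton(e)].append(e)
    automated, manual = [], []
    for members in groups.values():
        (automated if len(members) >= min_group else manual).extend(members)
    return sorted(automated), sorted(manual)


emails = ["user1@example.com", "user2@example.com", "user37@example.com",
          "j.smith.home@example.net"]
automated, manual = split_automated(emails)
print(manual)  # prints ['j.smith.home@example.net']
```

Randomised letter strings won't collapse this way, so treat the output as a triage hint, not a verdict.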

The only bit missing is... those uncracked passwords aren't going to crack themselves, and they may well be associated with campaigns. I've observed one password across many identities; it's easy for a group to remember (not ideal security, but that's off-topic).
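Spotting one password across many identities is a simple grouping exercise once you have cleartext. A minimal sketch, assuming records are dicts with `email` and `password` keys; `shared_passwords` is a hypothetical helper and the data is made up.

```python
from collections import defaultdict


def shared_passwords(records, min_identities=2):
    """Map each cleartext password to the distinct emails using it, keeping
    only passwords shared by at least min_identities, most-reused first."""
    pw_to_emails = defaultdict(set)
    for r in records:
        pw, email = r.get("password"), r.get("email")
        if pw and email:
            pw_to_emails[pw].add(email.lower())
    shared = [(pw, emails) for pw, emails in pw_to_emails.items()
              if len(emails) >= min_identities]
    shared.sort(key=lambda kv: len(kv[1]), reverse=True)
    return shared


records = [
    {"email": "a1@example.com", "password": "Tr1ckyGroupPass"},
    {"email": "b2@example.com", "password": "Tr1ckyGroupPass"},
    {"email": "c3@example.net", "password": "Tr1ckyGroupPass"},
    {"email": "d4@example.org", "password": "hunter2"},
]
for pw, emails in shared_passwords(records):
    print(pw, sorted(emails))
# prints Tr1ckyGroupPass ['a1@example.com', 'b2@example.com', 'c3@example.net']
```

In real dumps, very common passwords will dominate this list, so you'd probably want to drop anything that appears in a common-password list before reading the results.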

Extracting the cracked passwords to a wordlist file wouldn't hurt. Cracking as many of the residual hashes as possible is also useful, either to validate that a certain account is using a certain password (or a close variation of it), or to surface another password worth searching for, because sometimes a password is unique enough that searching for it turns up new threads too.
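Here's a minimal sketch of both steps: write the cleartext passwords to a wordlist, then dictionary-check the residual hashes against it. It assumes records are dicts with a `password` key and that the residual hashes are unsalted hex MD5, SHA-1 or SHA-256, guessed purely by length (a 32-character hash could just as well be NTLM). Salted or slow hashes such as bcrypt are better handed to hashcat or John the Ripper with the same wordlist. `build_wordlist` and `crack_residual` are hypothetical helpers.

```python
import hashlib

# Assumption: unsalted hex digests, identified only by their length.
ALGOS_BY_LEN = {32: "md5", 40: "sha1", 64: "sha256"}


def build_wordlist(records, path="wordlist.txt"):
    """Write the unique cleartext passwords from breach records, one per line."""
    words = sorted({r["password"] for r in records if r.get("password")})
    with open(path, "w", encoding="utf-8") as f:
        f.writelines(w + "\n" for w in words)
    return words


def crack_residual(hashes, words):
    """Dictionary-check residual hashes against the wordlist; return hash -> password."""
    found = {}
    for h in hashes:
        h = h.strip().lower()
        algo = ALGOS_BY_LEN.get(len(h))
        if algo is None:
            continue  # Unknown format: leave it for a dedicated cracker
        for w in words:
            if hashlib.new(algo, w.encode("utf-8")).hexdigest() == h:
                found[h] = w
                break
    return found
```

Usage would look like `crack_residual(residual_hashes, build_wordlist(records))`; any hit confirms that a hashed account shares a password with an identity you've already cracked.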

Key Takeaways:

- Search Historical Breach Data for Intel, and exhaust it where you find it.

- Crack passwords in breach data; the results may be significant on their own or compound your confidence.

- Look for OPSEC failures: IP addresses that look residential rather than proxy/anonymity/obfuscation infrastructure, and email addresses that look like non-burner or less automated identities.
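A first-pass triage of the IPs you collect can be sketched with Python's standard `ipaddress` module. The infrastructure list here is a hypothetical placeholder (documentation ranges only); in practice you'd load hosting, VPN or Tor exit ranges from a dataset you trust. Anything "unclassified" is only a lead to check for residential ownership, not proof of it.

```python
import ipaddress

# Hypothetical placeholder: replace with hosting/VPN/Tor ranges you trust.
INFRA_RANGES = [ipaddress.ip_network(c) for c in ("203.0.113.0/24", "198.51.100.0/25")]


def triage_ip(ip_str):
    """Rough OPSEC triage: known infrastructure, non-global, or unclassified."""
    ip = ipaddress.ip_address(ip_str)
    if any(ip in net for net in INFRA_RANGES):
        return "infrastructure"
    if not ip.is_global:  # Private, loopback, reserved and similar ranges
        return "non-global"
    return "unclassified"


for candidate in ("203.0.113.40", "10.1.2.3", "12.34.56.78"):
    print(candidate, triage_ip(candidate))
```

The infrastructure check runs first on purpose: Python treats the documentation ranges as non-global, so checking `is_global` first would hide them.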

Sometimes it works, sometimes it doesn't, but it's not a heavy effort to have a quick look, and that quick look might turn into a comprehensive loss of sleep.

Here's the code for Ex-Machina.py; have a read. By default it routes traffic through a local proxy on 127.0.0.1:8080. You'll need to give it your dehashed.com API key and account email. You can also cap the number of IP and email searches, because recursion can explode: you might want to keep to short distances from the parent target, or instead give it as much room as possible to find better pattern views. We can chat about this, but I'm pretty sure any serious hunters will be able to just use the methodology rather than this script. It writes raw.json (every entry returned) plus a summary of associations in output.json and summary.txt.

```python
import json
import sys
import time

import requests

# DeHashed search endpoint and basic-auth credentials (account email, API key),
# as used by the original script; check DeHashed's current API docs before use.
API_ENDPOINT = 'https://api.dehashed.com/search'
CREDENTIALS = ('YOUREMAIL', 'YOURAPI')
HEADERS = {'Accept': 'application/json'}

# Route through a local intercepting proxy (e.g. Burp on 127.0.0.1:8080).
# verify=False below is needed because the proxy re-signs TLS traffic.
PROXIES = {
    'http': 'http://127.0.0.1:8080',
    'https': 'http://127.0.0.1:8080',
}

IP_LIMIT = 10     # Max number of IP address queries
EMAIL_LIMIT = 10  # Max number of email queries

checked_items = {}  # Query type ('ip' / 'email') -> set of values already queried
email_to_ips = {}   # Maps each email to the set of associated IP addresses
ip_to_emails = {}   # Maps each IP address to the set of associated emails
raw_data = []       # Every entry returned, with a 'parent' field added

# Entry field name -> query type key used in checked_items
FIELD_TO_TYPE = {'email': 'email', 'ip_address': 'ip'}


def make_request(query, query_type):
    """Query DeHashed once per value, respecting the per-type limits."""
    seen = checked_items.setdefault(query_type, set())
    if query in seen:
        return None
    limit = IP_LIMIT if query_type == 'ip' else EMAIL_LIMIT
    if len(seen) >= limit:
        print(f'Skipped request for {query_type} {query} due to limit')
        return None
    seen.add(query)
    time.sleep(0.33)  # Simple rate limiting between API calls
    try:
        # params= lets requests URL-encode the query (e.g. the '@' in emails)
        response = requests.get(API_ENDPOINT, params={'query': query},
                                auth=CREDENTIALS, headers=HEADERS,
                                proxies=PROXIES, verify=False, timeout=30)
        response.raise_for_status()
        return response.json()
    except requests.exceptions.RequestException as e:
        print(f'Request error: {e}')
    except ValueError as e:  # Body was not valid JSON
        print(f'JSON decode error: {e}')
    return None


def process_response(data, parent=None):
    """Record associations, then recursively search every new email/IP."""
    entries = data.get('entries') or []  # 'entries' can be null when nothing matches
    for entry in entries:
        entry['parent'] = parent  # The query value that led to this entry
        raw_data.append(entry)
        email = entry.get('email')
        ip_address = entry.get('ip_address')
        if email and ip_address:
            email_to_ips.setdefault(email, set()).add(ip_address)
            ip_to_emails.setdefault(ip_address, set()).add(email)
        for field, query_type in FIELD_TO_TYPE.items():
            value = entry.get(field)
            if value and value not in checked_items.get(query_type, set()):
                result = make_request(value, query_type)
                if result is not None:
                    process_response(result, parent=value)


# Validate command line arguments
if len(sys.argv) < 2:
    print('Usage: python3 Ex-Machina.py <start>')
    print('<start> must be an IP address or an email address')
    sys.exit(1)

start = sys.argv[1]
query_type = 'email' if '@' in start else 'ip'

data = make_request(start, query_type)
if data is not None:
    process_response(data, parent=start)

# Append a human-readable summary
with open('summary.txt', 'a') as f:
    for email, ips in email_to_ips.items():
        f.write(f'Email {email} is associated with IP addresses {sorted(ips)}\n')
    for ip_address, emails in ip_to_emails.items():
        f.write(f'IP address {ip_address} is associated with emails {sorted(emails)}\n')

# Save machine-readable results (sets converted to lists for JSON)
summary = {
    'checked_items': {k: sorted(v) for k, v in checked_items.items()},
    'email_to_ips': {k: sorted(v) for k, v in email_to_ips.items()},
    'ip_to_emails': {k: sorted(v) for k, v in ip_to_emails.items()},
}
with open('output.json', 'w') as f:
    json.dump(summary, f, indent=2)
with open('raw.json', 'w') as f:
    json.dump(raw_data, f, indent=2)
```

Run it with `python3 Ex-Machina.py 12.34.56.78` or `python3 Ex-Machina.py [email protected]`.
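Once output.json exists, a quick way to see where to dig next is to rank entries by fan-out: emails seen on many IPs, and IPs shared by many emails. A minimal sketch, assuming only the `email_to_ips` / `ip_to_emails` keys the script writes; `rank_associations` is a hypothetical helper, and the file path is taken from the command line.

```python
import json
import sys


def rank_associations(summary, top=10):
    """Sort the email_to_ips / ip_to_emails maps from output.json by fan-out."""
    def by_fanout(mapping):
        return sorted(mapping.items(), key=lambda kv: len(kv[1]), reverse=True)[:top]
    return by_fanout(summary.get("email_to_ips", {})), by_fanout(summary.get("ip_to_emails", {}))


if __name__ == "__main__" and len(sys.argv) > 1:
    with open(sys.argv[1], encoding="utf-8") as f:
        emails, ips = rank_associations(json.load(f))
    for email, seen_on in emails:
        print(f"{email}: {len(seen_on)} IPs")
    for ip, seen_with in ips:
        print(f"{ip}: {len(seen_with)} emails")
```

For example, `python3 rank.py output.json` (the filename `rank.py` is arbitrary). High-fan-out IPs tend to be shared infrastructure; low-fan-out emails on those IPs are often the interesting ones.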

If you're in a position where collaboration exists, the sites that suffered the breaches may be forthcoming with more verbose information about the targets you're chasing. I can't speak to that, though... out of my league.